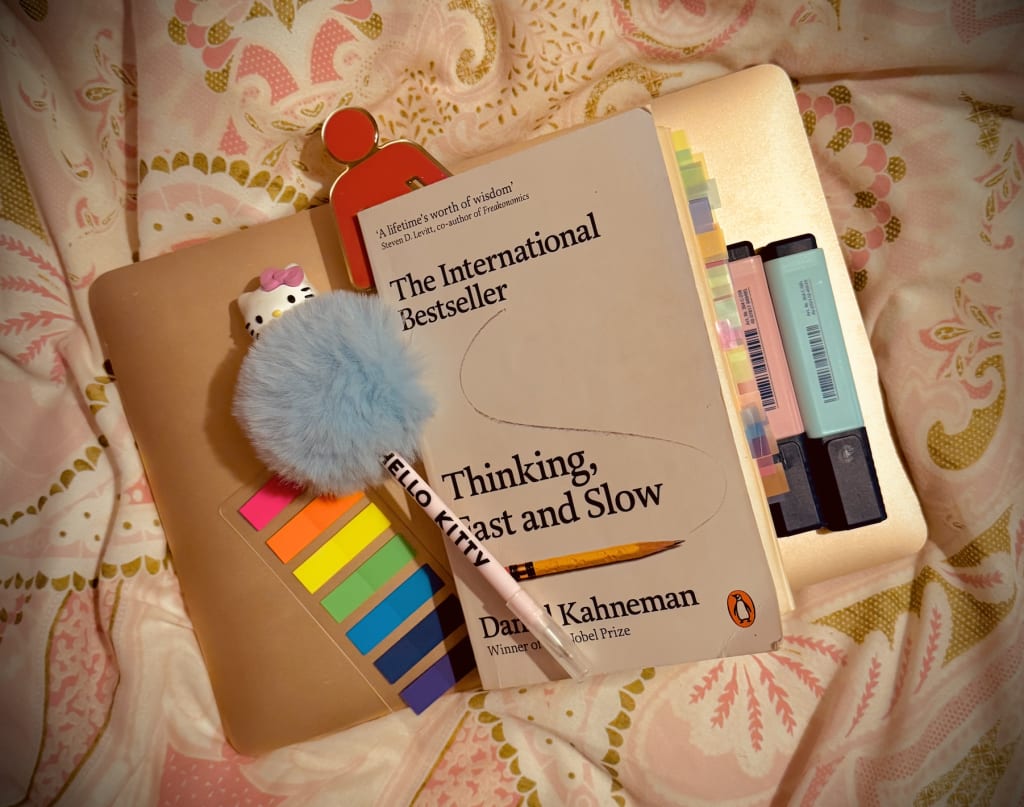

The Big Book Review: "Thinking, Fast and Slow" by Daniel Kahneman (Pt.2)

Chapters 10 to 18

Welcome back to Part 2 of our 'Big Book Review' on Thinking, Fast and Slow by Daniel Kahneman. In the previous section, we saw that Kahneman paid close attention to the two 'systems' of our thinking - one that seemed more impulsive and quicker to judge than the other. Now, we turn our attention to why the supposedly more 'critical' system in our brain may not be all it's cracked up to be and may even be lazy. Let's dive into what this book can tell us about 'Heuristics and Biases'...

In The Law of Small Numbers, Kahneman begins by holding up a mirror to our own biases. He opens by stating that system 1 is "inept" when faced with "merely statistical" facts which "change the probability of outcomes but do not cause them to happen" (p.110). I was quite surprised at this, but continued to read about an experiment in which Kahneman asks us to think about the statistics surrounding rural residents getting kidney cancer. He gives us a broad overview of why we think the way we do when it comes to certain statistics:

"...extreme outcomes (both high and low) are more likely to be found in small than in large samples" (p.111)

Of course, if we think back to when we once drew graphs (even though it makes me shiver), we would see that the more results we gained access to, the more they clustered in one area, and the less any anomalies affected the overall picture. Thus, he states "large samples are more precise than small samples" (p.111). We already know this through basic critical thinking, but many of us could not (until now) explain why or how. He goes on to explain that "researchers who pick too small a sample leave themselves at the mercy of sampling luck" (p.112). Again, this is obvious - but through biases it can still happen.
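For anyone who likes to see this in action, here is a minimal Python sketch of the idea. It is my own illustration and not something from the book: the function name `extreme_share`, the 50% underlying rate and the 30%/70% cut-offs for what counts as 'extreme' are all assumptions I have picked for the demonstration.

```python
import random

def extreme_share(sample_size, n_samples=2000, p=0.5, seed=0):
    """Share of samples whose observed rate looks 'extreme'.

    Every sample is drawn from the same process with true rate p,
    so any extreme result is down to sampling luck alone.
    The 0.3 / 0.7 cut-offs are arbitrary, chosen for illustration.
    """
    rng = random.Random(seed)
    extreme = 0
    for _ in range(n_samples):
        hits = sum(rng.random() < p for _ in range(sample_size))
        rate = hits / sample_size
        if rate <= 0.3 or rate >= 0.7:
            extreme += 1
    return extreme / n_samples

small = extreme_share(sample_size=10)     # e.g. a tiny rural county
large = extreme_share(sample_size=1000)   # e.g. a big city

print(f"extreme results in small samples: {small:.1%}")
print(f"extreme results in large samples: {large:.1%}")
```

Nothing about the underlying process changes between the two runs. Only the sample size does, and yet the small samples throw up extreme results far more often.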

When I read a book called How to Change Your Mind by Michael Pollan, I saw that researchers could often get over-zealous about their own research and therefore project their own biases on to the experiment, in some ways that they are definitely aware of and in others that are more subconscious. Lowering the number of participants in an experiment in order to rely on luck to produce results may be something more subconscious than we are willing to accept. On top of this, we have the system 1 issue we faced in the previous part, entitled Two Systems. Kahneman states:

"...System 1 is not prone to doubt. It suppresses ambiguity and spontaneously constructs stories that are as coherent as possible. Unless the message is immediately negated, the associations that it evokes will spread as if the message were true. System 2 is capable of doubt, because it can maintain incompatible possibilities at the same time" (p.114).

I would like to make a small comment about systems 1 and 2 here: I don't think many people are using system 2 on social media because of the way people communicate in short bursts, without much critical thinking. Social media is not a space for that. It is a space where the very worst individuals tend to be the loudest because they cannot think critically. I would just like everyone to think about that. I also hope this makes systems 1 and 2 easier to understand.

But the fact that we think about these things in exaggeration is a serious misfire in judgement apparently. We can exaggerate the 'coherence' and 'consistency' in what we see and thus, make mistakes judging just how random events can be (p.114-5). Kahneman talks about people as seeking coherency through a thought experiment in which we must think about which set of male and female births is the most random and which is the least. He states about human nature:

"We are pattern seekers, believers in a coherent world in which regularities (such as a series of six girls) appear not by accident but as a result of mechanical causality or of someone's intention. We do not expect to see regularity produced by a random process, and when we detect what appears to be a rule, we quickly reject the idea that the process is truly random. Random processes produce many sequences that convince people that the process is not random after all" (p.115).

Kahneman uses many different things (not just births of male and female children) to illustrate this to make sure we understand that this can be represented in all sorts of situations of the real world. One of the ways he presents this is through a basketball game. Another is through the success of business people and CEOs and whether they have 'flair' or the successes they achieve are just random.
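To make the births example concrete, here is a small Python sketch of my own. It assumes that boys and girls are equally likely and that each birth is independent of the last, and the three example sequences are simply ones I have chosen to compare a 'regular' looking run with 'random' looking ones.

```python
from itertools import product

# Every possible order of six births, assuming B and G are equally
# likely and each birth is independent of the others.
sequences = ["".join(s) for s in product("BG", repeat=6)]

# Under those assumptions each exact sequence has the same probability.
p_each = 1 / len(sequences)

examples = ["GGGGGG", "BBBGGG", "BGBBGB"]
for seq in examples:
    print(f"{seq}: probability {p_each:.4f}")
```

The run of six girls looks like it must mean something, but under these assumptions it is exactly as likely as any of the messier looking sequences.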

Therefore, the biggest take-away from this section I found was that if we are presented with a small enough set of data, we should always question the reliability of it. There are far too many things that could go wrong.

Chapter 11, entitled Anchors, looks at judgement once again. The main idea here is that an initial number or value (even a random, irrelevant one) exerts a magnetic pull on later judgements. Kahneman defines the 'anchoring effect' as:

"...when people consider a particular value for an unknown quantity before estimating the quantity" (p.119).

Here, we clearly have two things happening if we are to be influenced by even irrelevant numbers or values on a particular idea. One of these things is 'priming' (which was also discussed in Part 1: Two Systems if you would like to learn more). This is where system 1 is influenced by subconscious associations. For example: thinking of “high numbers” primes you to give higher estimates even if the high numbers and high estimates are completely unrelated. This is where Kahneman tells us that people's "judgements (are) influenced by an obviously uninformative anchor" (p.120), going on to show us how that happens.

We then see how system 2, however insufficiently, tries to adjust away from the anchor. Kahneman shows us something very realistic and physical here to depict that adjustment is a very 'effortful' thing that must be done. But even then, it isn't perfect:

"...people who are instructed to shake their head when they hear the anchor, as if they rejected it, move further from the anchor and people who nod their head show enhanced anchoring" (p.121).

The main point is that anchors work on all of us, even if we know they are completely useless. I liken this to what I call the 'Shein Problem' (which I am guilty of). I will go to a clothes shop and say to myself 'this £10 top is too expensive, I bet I can get it on Shein for cheaper' and then go and do that. Buying on Shein has therefore anchored my ability to estimate how much a top should cost in my own mind, and even though I know that is not a viable way of thinking about things, it doesn't stop me from continuing with that train of thought whenever I go out shopping.

This leads us on to Kahneman's evaluation of anchoring in the way people make decisions about their money and the way they use it (p.124). He talks about the way people will think about giving to charity if monetary suggestions are provided in comparison to when they are not provided. His conclusion seems to be that uninformative anchors can be just as effective as informative ones when it comes to handing over money to a charitable cause. Things such as percentages and large numbers can definitely make people hand over larger sums of money even if the large numbers have nothing to do with the money itself.

From real estate to wheels of fortune, no matter what part of the professional or intelligence (perceived or real) ladder you are on, there is absolutely no escaping the subconscious bias of an anchor - even if you are completely and utterly aware that it is absolutely meaningless to the situation at hand.

One of my favourite quotations from the book is something that on the surface, seems irrelevant but when we glance beneath the surface, Kahneman is solidifying his point about anchoring and suggestion:

"If the content of a screen saver on an irrelevant computer can affect your willingness to help strangers without you being aware of it, how free are you?" (p.128).

Maybe we are not as 'free' as we thought.

Chapter 12 is on The Science of Availability and what Kahneman calls the 'availability heuristic'. It is basically about the fact that we judge how common or likely something is by how easily an example comes to mind. I found this part quite simple to understand and I already knew about the idea. Kahneman, though, gives us a great insight into the 'how' and the 'why'. We start off with the idea that we substitute one thing for another in some contexts:

"The availability heuristic, like other heuristics of judgement, substitutes one question for another: you wish to estimate the size of a category or the frequency of an event, but you report an impression of the ease with which instances come to mind. Substitution of questions inevitably produces systematic errors" (p.130).

Kahneman explains to the reader that there are always going to be errors when the ease with which you can recall something serves as the basis for the judgement in question. He talks about "salient events" which draw attention, such as celebrity news. This kind of news comes easily to mind though it might not actually be relevant to anything. But because of how easily you can recall it, how much it is reported on and how saturated it is everywhere, you estimate it as happening more frequently than it probably does (p.130). Personal experiences, meanwhile, affect our faith in various systems, with negative experiences affecting it far more broadly (p.130). Even Kahneman states that "resisting this large collection of potential availability biases is possible, but tiresome" (p.131). This suggests that complete recognition and resistance is impossible because of how 'tiresome' it would be for the brain to commit to it all the time and in every instance.

Kahneman then shows us exactly what this looks like in practice: married couples were asked to rate their contributions to the household (we all know the women tend to do more), and in self-assessment, the men also reported doing more. But in the reports of how much the other person does, there was a dramatic decrease in how much was reported (p.132). As we go on, we see that those who are more able to retrieve particular instances of being assertive from their memory are also more likely to report that they are assertive in their lives. It even extends to the fact that people who were asked to frown during the task experienced more cognitive strain and were therefore less able to do the task - resulting in lower reported assertiveness (p.132-33).

As we build on this, Kahneman teaches us that more factors were tested and measured in terms of ease of retrieval on top of not frowning and lower cognitive strain. These include but aren't limited to: "being engaged in another effortful task", being in a "good mood" (though how we measure 'good mood' is something to be questioned), if they "score low on a depression scale" (which makes sense since reports of memory loss often accompany those who show signs of depression) and of course, being "made to feel powerful" (p.135). Thus, being in a better mood generates more accurate memories and, on top of this, can paradoxically produce less accurate readings on a self-assessment of, for example, assertiveness.

One of the take-away points we can see in the opposite image is that public fear often focuses on memorable risks, not likely risks. We should therefore be all the more aware of how the media often skews things not in order to put forward the truth, but to make certain things seem more frequent than they actually are through emotional connection and emphasis.

Chapter 13 covers Availability, Emotion and Risk in which, similarly to the previous chapter, emotional events produce extraordinary vividness, which exaggerates their perceived likelihood. This is framed by the work of an economist who:

"...observed that protective actions [against natural disaster], whether by individuals or governments, are usually designed to be adequate to the worst disaster actually experienced" (p.137).

And now that it is said like that, it doesn't seem like such a great idea any more. When Kahneman develops this argument, he even looks at an experiment carried out on those who do not display what are considered 'appropriate emotions' before an event (this could be because of anything from purposefully impaired judgement to brain damage). He concludes that:

"An inability to be guided by a 'healthy fear' of bad consequences is a disastrous flaw" (p.139).

Of course it is. If we are not scared by our decision-making a little bit then we cannot possibly make the best decisions. It is a survival instinct towards possible dangers. People under the influence of drugs and/or alcohol have often (in popular culture at least) reported feeling uninhibited while under the influence but, by the next day, heightened senses of anxiety and fear. This could be seen as that 'healthy fear' being eliminated, with the fear of possible consequences 'flowing back', as it were, once the effect wears off. That's just my observation.

When it comes to policy though, government officials are often consulted as being the 'experts', but of course Kahneman is going to break this apart for us as well. He shows us what is clearly wrong with this assumption by connecting the point past with the point present. Experts, he states, often show the very same biases that everyone else does and yet, their preferences about 'perceived risk' tend to move away from what the normal or average person perceives as risk. For example: since something is not a risk to the policy maker/expert, they are less likely to pay attention to it as a likely risk to anyone else (p.140). He makes an interesting statement about this:

"Every policy question involves assumptions about human nature, in particular about the choices that people may make and the consequences of their choices for themselves and for society" (p.141).

BUT, Kahneman also admits that public outrage and panic often influence the decisions made by policy makers and experts in what is called the availability cascade. This is described as a "self-sustaining chain of events": something is reported by the media, which causes public outrage and thus leads to government action. This is dangerous as the media obviously (as we have already learnt) does not display risk truthfully and often only wants to grab the attention of the person viewing the headline. But sitting back and doing nothing will result in the public becoming worried about their government not tackling the people's issues (p.142).

But one thing that this also shows us is the way we measure risk is very strange. We are either measuring it by too small of a degree, or we are giving it far too much weight and attention, overestimating it entirely. There is, as Kahneman says, never an in-between (p.143). This is how therefore, we often see the distortion of "priorities in the allocation of public resources" (p.144).

This is basically where we pay attention to things out of the ordinary more than we probably should, thus we are impacted by them emotionally and ignore the things that are perhaps more mundane but in reality, more frequent.

We then get taken to something called Tom W's Speciality which is, again, more of a thought experiment than anything else. Our topic is representativeness and this is basically Kahneman showing us that when judging which category someone belongs to, we rely on similarity to stereotypes, not statistical likelihood. Again, our biases are an obstacle to truth or anything resembling truth.

Participants are given a description of the 'graduate' which is Tom W and nine different subjects. After the description, they are asked to rate them from 'most likely' to 'least likely' in terms of what they think he would study. Since he is described as not very empathetic and quite mechanical, there is an easy answer to 'most likely', which is: computer science. Kahneman states that even in the forty years since he designed the description of Tom W, the stereotypes haven't changed by much (p.146-8).

The problem with stating that Tom W must do computer science, because of a shoddy personality description written years before the graduate degree he is on, is that it ignores base rates. It is a fact there are far more humanities students than computer science students. Therefore, he is much more likely to be a humanities student regardless of how we feel about his personality (p.148).
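Here is a rough Python sketch of how a base rate can outweigh a description that 'fits'. Every number in it is made up by me purely for illustration (none of them come from the book), and the `posterior` helper is my own naming. It only compares the two fields against each other.

```python
def posterior(base_rates, fit):
    """Combine base rates with how well the description fits each field.

    base_rates: relative share of students in each field
    fit: chance that a student in that field matches the description
    Returns the probability of each field, given the description,
    normalised across the fields supplied.
    """
    joint = {field: base_rates[field] * fit[field] for field in base_rates}
    total = sum(joint.values())
    return {field: value / total for field, value in joint.items()}

# Hypothetical numbers, chosen only to illustrate the point.
base_rates = {"computer science": 0.03, "humanities": 0.20}
fit = {"computer science": 0.60, "humanities": 0.10}

result = posterior(base_rates, fit)
for field, prob in result.items():
    print(f"{field}: {prob:.0%}")
```

In this made-up case the description fits a computer science student six times better, and yet humanities still comes out ahead because there are so many more humanities students to begin with.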

However, judging how probable something is by the representativeness it has, according to Kahneman has "important virtues" (p.151) such as things you can usually guess via intuition. This includes the idea that "people who act friendly are in fact, friendly" (p.151). Though I don't agree with this sentiment and basically everyone has an intuitive self-centredness to their friendly motives - I do like the way it has been phrased.

Kahneman states that:

"When an incorrect intuitive judgement is made, system 1 and system 2 should both be indicted. System 1 suggested the incorrect intuition, and system 2 endorsed it and expressed it in a judgement. However, there are two possible reasons for the failure of system 2 - ignorance and laziness" (p.153).

He goes on to state that system 2 will only apply the knowledge that base rates are relevant and present when it is invested in the particular task with a special amount of effort. Apart from this, another 'sin' is an ignorance towards the quality of evidence. Even though we are told that the evidence about Tom W's personality shouldn't be trusted, we use it anyway (p.153).

In the second part of the study of 'representativeness', entitled Less is More, we get to study a character called 'Linda'. This chapter is about how people commit the conjunction fallacy: they end up believing specific statements are more likely than general ones. The experiment is this: Linda is described as bright, politically active, concerned with social issues and a philosophy major (p.156). Participants were then given some statements to choose from, including whether she is a bank teller or whether she is a feminist bank teller. The former has to be more probable, since every feminist bank teller is also a bank teller, but based on the description you are far more likely to choose the latter because of the conjunction fallacy. The reason it happens, according to Kahneman, is that system 1 substitutes a hard question such as: “How likely is this combination?” with an easier question such as: “Does this description match the stereotype?”

Here, representativeness overrides logical reasoning, causing us to make mistakes upon the judgement of others. This is one of the ways people form stereotypes and judgements on social media as well and, as we often find out - they can turn out to be completely wrong.
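The logic behind the Linda problem can be checked with a couple of lines of Python. This is my own sketch, and both probabilities in it are invented for the example rather than taken from the book.

```python
def p_conjunction(p_a, p_b_given_a):
    """P(A and B) = P(A) * P(B given A)."""
    return p_a * p_b_given_a

# Made-up numbers: even if we are almost certain that Linda would be
# a feminist, the combination cannot beat 'bank teller' on its own.
p_teller = 0.05
p_feminist_given_teller = 0.95

p_feminist_teller = p_conjunction(p_teller, p_feminist_given_teller)

print(f"bank teller:          {p_teller:.4f}")
print(f"feminist bank teller: {p_feminist_teller:.4f}")
```

Because the second number is the first multiplied by something no bigger than 1, the more detailed statement can never be the more probable one, however well it matches the stereotype.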

In the chapter Causes Trump Statistics, we witness how Kahneman comes to the conclusion that humans prefer meaningful causal narratives over statistical truths, even when the statistics should outweigh the causal narratives. Much of this chapter looks at the idea of stereotyping and Kahneman starts this argument by stating:

"Stereotyping is a bad word in our culture, but in my usage it is neutral. One of the basic characteristics of system 1 is that it represents categories as norms and prototypical examples...When the categories are social, these representations are called stereotypes. Some stereotypes are perniciously wrong and hostile stereotyping can have dreadful consequences, but the psychological facts cannot be avoided: stereotypes both correct and false, are how we think of categories" (p.169)

I think this is an important analysis we need to acknowledge. Stereotyping has a positive angle as well, because it lets us make an initial decision - even if there are some horrific ways it has been used in society to subjugate and victimise people. Kahneman goes on to state that neglecting stereotypes altogether can actually be just as dreadful, as it is nothing but a "laudable moral position" and it satisfies the "politically correct" though it is probably not "scientifically defensible" (p.169). The fact that, more often than not, the idea of stereotyping possible dangers is thrown out for the moral high ground seems to annoy Kahneman, but my question is: what other choice do people have when the ability to show our intuition in this way is overruled by force of law? It's all well and good saying this, but the law does not allow people to say how they truly feel and so, there are many who would create false statistics in this respect, saying something they don't truly believe on both sides of the equation.

Apart from laughing at the fact that Kahneman stated that teaching psychology is "mostly a waste of time" (p.170) we get a look at bystander syndrome. Kahneman tells us of an experiment in which a man (actor) was (acting) as though he was having a stroke and waiting for people to come and help him. There were very few people initially, but after seeing some were helping, others came to help. Four out of the fifteen people came to help. A few never even looked up (p.171). I have to say that if you are a psychology student at university, you will probably not be surprised by this. I was never a psychology student at university and I'm not surprised by this. As someone who never looks up for anything when I'm out and about - bystander syndrome is a natural part of human behaviour. I am not accountable for you, you are not accountable for me.

But Kahneman likes to think that we all believe we are decent people, rushing in to help (p.171). I think he gets this upside-down. Many people would say that but are probably not thinking it at all. He can't measure what people are thinking of course, but in a case of morality, people definitely care about what others think of them and thus, report they would do something more morally brilliant than what they are actually thinking which might be more along the lines of 'I genuinely don't care.' That part hasn't been discussed.

So, is it true that, according to Kahneman, in order to teach psychology to students you must "surprise them" (p.173)? No, you just need to remove the morality performance. On the other hand, we also need to stop constructing random reasoning for things that happen. Human behaviour is not as reasonable as we like to think and we have already seen why. A conclusive take-away (if not my complaints about morality performance) is that whatever it is, our system 1 hates the idea of randomness. It often constructs reasons and romances where there are none to be found.

This moves us on to Regression to the Mean, which is built on top of the previous chapter, so the analysis isn't too long or drawn out. It works on various ideas we have already seen to come to the conclusion that extreme performances are usually followed by more average ones, not because of intervention, but because of statistics. One thing I find very important in this chapter is what Kahneman found out about human behaviour, which is probably best characterised by those who spend their days arguing on social media:

"I had stumbled onto a significant fact of the human condition: the feedback to which life exposes us is perverse. Because we tend to be nicer to other people when they please us and nasty when they do not, we are statistically punished for being nice and rewarded for being nasty" (p.176).

My joke here is that everyone can think of someone who was rewarded for being nasty or apathetic as they were punished for being kind and if you can't think of the nasty person - it was probably you. Kahneman looks at this through the lens of success though. People who are successful have a mixture of talent and luck, not necessarily just one of the two, and definitely not only one. Thus, people who are less competitive and 'nicer' tend to get punished more with not being as successful.

He looks at the Sports Illustrated effect in which those who appear on the front cover of the magazine tend to perform worse in their sport the next season. This is statistical as they would have had to perform massively well to be able to appear on the magazine in the first place. Thus, according to math, they are only more likely to perform worse after appearing on the cover due to chance (p.178). So, it isn't a 'jinx'. It's just math.

Therefore, we can definitely see that confusing correlation with causation is not just limited to normal and average people, but is there in research as well (p.183) and so, we can draw this one conclusion about statistics: sometimes improvement and deterioration are without reason, they are simply regressions to the normal or the mean.
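If you want to watch the Sports Illustrated effect appear out of nothing but chance, here is a small Python simulation I put together. It is only a sketch under my own assumptions: each athlete's performance in a season is a fixed amount of 'skill' plus fresh 'luck', both drawn from the same bell curve, and the 'cover stars' are simply the top 5% of performers in the first season.

```python
import random
from statistics import mean

rng = random.Random(42)
n_athletes = 10_000

# Assumption: performance = stable skill + luck that resets each season.
skill = [rng.gauss(0, 1) for _ in range(n_athletes)]
season1 = [s + rng.gauss(0, 1) for s in skill]
season2 = [s + rng.gauss(0, 1) for s in skill]

# 'Cover stars': the top 5% of performers in the first season.
ranked = sorted(range(n_athletes), key=lambda i: season1[i], reverse=True)
cover_stars = ranked[: n_athletes // 20]

avg_season1 = mean(season1[i] for i in cover_stars)
avg_season2 = mean(season2[i] for i in cover_stars)

print(f"cover stars, season 1 average: {avg_season1:.2f}")
print(f"cover stars, season 2 average: {avg_season2:.2f}")
```

Nobody in this simulation is jinxed and nobody's skill changes. The cover stars still do worse on average the following season, while staying above the overall average, simply because their first season included an unusually good dose of luck.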

When we are Taming Intuitive Predictions we must consider the fact that our intuitive predictions have too much of ourselves in them. They are too confident, they are too extreme, and often fluctuate quite far from the actual truth of things. According to Kahneman, they "can be made with high confidence even when they are based on nonregressive assessments of weak evidence" (p.185).

He supports this by claiming that we can reject information we know to be false or irrelevant but it isn't something that system 1 can do on its own. Evidence presented is often compared to a relative norm that we have inside our heads, whether supported by actual statistics or not. But alongside the "flimsy evidence" we do have, we substitute the actual question with one that is easier to answer as we have already constructed the narrative in our own minds at this point (p.186-7).

Kahneman also states that it is system 2 that should correct our intuitive predictions but even then, it is hard to rid yourself of them entirely. In fact, he admits - it may worsen your quality of life, complicating it beyond measure (p.192) if you were to attempt to do so. It is absolutely impossible to be unbiased and completely without intuitive judgements. Thus, system 2 has the difficult job of overriding the romanticism and irrationality presented by system 1, but at what cost, we are left to wonder?

Conclusion:

Thank you for reading this section on Thinking, Fast and Slow by Daniel Kahneman 'Part 2'. Next time, I'll be back for Part 3 and I hope you've learnt a bit and discovered a bit more...

About the Creator

Annie Kapur

I am:

🙋🏽♀️ Annie

📚 Avid Reader

📝 Reviewer and Commentator

🎓 Post-Grad Millennial (M.A)

***

I have:

📖 300K+ reads on Vocal

🫶🏼 Love for reading & research

🦋/X @AnnieWithBooks

***

🏡 UK
